Chapter 2

Goggles, Gloves, and CAVEs: The Technology of Virtual Reality

If viewers could look inside Star Trek's Holodeck, they would probably find advanced versions of the same kinds of equipment and software that virtual reality systems contain today. The heart of the system would surely be a powerful computer with programs that produce complex, three-dimensional images. Devices would send information from the computer to most or all of the crew members' senses: sight, hearing, touch, and probably smell and even taste. Other devices would pick up information about the members' body and hand movements, voices, and perhaps even thoughts, and would convey this information to the computer.

Drawing Virtual Pictures

The best virtual reality systems—the ones owned by large universities and corporations—use expensive supercomputers with special graphics capability, such as the Silicon Graphics Onyx series. Many home computers can create more limited versions of virtual reality with software such as QuickTime VR. Video game consoles like the Sony PlayStation 2 and Microsoft Xbox, which are a form of computer, also produce convincing three-dimensional graphics, stereo sound, and sometimes limited touch sensations as well. Neither they nor home computers, however, create the feeling of being completely immersed in a virtual world that systems with large screens can produce.

A computer that creates virtual reality needs tremendous processing power because the work it must do is so challenging. First, the computer must draw, or render, complex, realistic-looking graphics. Software usually builds these graphics from combinations of polygons, which are two-dimensional geometric shapes with three or more straight sides. Polygons are even used to make curved shapes (which consist of many small polygons attached at very small angles) because computers can draw polygons much more easily than they can draw free-form shapes. The more polygons an image contains, the more realistic it will look, and the longer it will take to draw. A single landscape may contain thousands of polygons.
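The trade-off between smoothness and polygon count shows up even in a flat, two-dimensional version of the problem. The Python sketch below is an illustration, not code from any real graphics package. It approximates a circle with a regular polygon and measures how much area the straight edges cut off as the number of sides grows.

```python
import math

def inscribed_polygon_area(sides: int, radius: float = 1.0) -> float:
    """Area of a regular polygon whose corners sit on a circle of the given radius."""
    # The polygon splits into `sides` identical triangles meeting at the center.
    # Each triangle has two edges of length `radius` with an angle of 2*pi/sides between them.
    return 0.5 * sides * radius ** 2 * math.sin(2 * math.pi / sides)

if __name__ == "__main__":
    circle = math.pi  # area of a true circle with radius 1
    for n in (4, 8, 32, 128, 1024):
        area = inscribed_polygon_area(n)
        shortfall = 100 * (circle - area) / circle
        print(f"{n:5d} sides: area {area:.5f}, {shortfall:.4f}% short of a true circle")
```

Each doubling of the number of sides shrinks the error roughly fourfold. It also doubles the number of pieces the computer must draw.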

The computer graphics software and processor combine the polygons into frames or skeletons that look as if they are made out of wire, then add textures and colors. Instead of drawing the textures a bit at a time, programs often use texture "maps" made from photographs of real materials or objects, such as rocks. They overlay the maps on the frames as if covering them with wallpaper. Shading and lighting effects complete the illusion of three dimensions.
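Texture mapping works by pinning each corner of a polygon to a spot on the texture image. Every point inside the polygon then borrows its color from the matching spot in between. The minimal Python sketch below applies that idea to a single triangle. It assumes the texture is a small grid of colors and uses the simplest nearest-pixel lookup, whereas real graphics hardware uses more refined filtering. All function names are hypothetical.

```python
from typing import List, Tuple

Color = Tuple[int, int, int]   # (red, green, blue)
Point = Tuple[float, float]

def barycentric(p: Point, a: Point, b: Point, c: Point) -> Tuple[float, float, float]:
    """Weights that express point p as a blend of triangle corners a, b, and c."""
    det = (b[1] - c[1]) * (a[0] - c[0]) + (c[0] - b[0]) * (a[1] - c[1])
    wa = ((b[1] - c[1]) * (p[0] - c[0]) + (c[0] - b[0]) * (p[1] - c[1])) / det
    wb = ((c[1] - a[1]) * (p[0] - c[0]) + (a[0] - c[0]) * (p[1] - c[1])) / det
    return wa, wb, 1.0 - wa - wb

def sample(texture: List[List[Color]], u: float, v: float) -> Color:
    """Nearest-pixel lookup: u runs across the image and v runs down it, each from 0 to 1."""
    rows, cols = len(texture), len(texture[0])
    col = min(max(int(u * cols), 0), cols - 1)
    row = min(max(int(v * rows), 0), rows - 1)
    return texture[row][col]

def textured_color(p: Point, corners: Tuple[Point, Point, Point],
                   corner_uvs: Tuple[Point, Point, Point],
                   texture: List[List[Color]]) -> Color:
    """Color of point p inside a triangle whose corners are pinned to texture spots."""
    weights = barycentric(p, *corners)
    # Blend the corners' texture coordinates with the same weights as the position.
    u = sum(w * uv[0] for w, uv in zip(weights, corner_uvs))
    v = sum(w * uv[1] for w, uv in zip(weights, corner_uvs))
    return sample(texture, u, v)

if __name__ == "__main__":
    rock = [[(90, 80, 70), (120, 110, 100)], [(60, 55, 50), (140, 130, 120)]]
    tri = ((0.0, 0.0), (10.0, 0.0), (0.0, 10.0))
    uvs = ((0.0, 0.0), (1.0, 0.0), (0.0, 1.0))
    print(textured_color((9.0, 0.5), tri, uvs, rock))
```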

Rendering a detailed, three-dimensional picture even once is hard enough. A computer used for virtual reality, however, must do this task over and over, usually twenty to thirty times a second, because the view changes every time the user moves or something in the scene changes. A person's eye holds the impression of an image for only about a tenth of a second, so new pictures must replace old ones faster than that, or the scene will seem to jerk and flicker instead of moving smoothly. (For a similar reason, film moves through a movie projector at twenty-four frames a second so that people will see motion rather than a "slide show" of still photos.) In reproducing a complex picture thirty times a second, a computer may have to draw millions of polygons each second.
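The arithmetic behind these numbers is simple multiplication and division. This short Python sketch assumes a hypothetical scene of 100,000 polygons. The figures are illustrative, not measurements of any particular system.

```python
def frame_budget_ms(frames_per_second: float) -> float:
    """Time available to draw one complete picture, in milliseconds."""
    return 1000.0 / frames_per_second

def polygons_per_second(polygons_per_frame: int, frames_per_second: int) -> int:
    """Polygons the computer must draw every second to keep up."""
    return polygons_per_frame * frames_per_second

if __name__ == "__main__":
    scene_polygons = 100_000  # hypothetical size of one detailed scene
    for fps in (20, 30):
        print(f"{fps} frames/s: {frame_budget_ms(fps):.1f} ms per frame, "
              f"{polygons_per_second(scene_polygons, fps):,} polygons per second")
```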

Communicating with Users

A computer running a virtual reality system also must change its display when users turn their heads or move their hands—and must do so without a delay that the users can notice. If there is a lag, people using the program may feel disoriented or even suffer simulator sickness, a reaction much like seasickness or airsickness. People have sensors in their inner ears, bones, muscles, joints, and skin that help their bodies maintain balance and keep track of their position. If these sensors receive conflicting information, or information that disagrees with what the eyes seem to be seeing, the person is likely to feel dizzy and nauseated. This can happen when the head turns and the view of a virtual landscape changes more slowly than the person would expect the view of a real landscape to change. It can also happen when a person's body senses that it is standing still, yet the surroundings seem to be moving.
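A rough calculation shows why even a short delay matters. If the head turns at a steady speed, the picture on the screen points where the head was a moment ago. The view therefore trails the real direction by the speed multiplied by the delay. The Python sketch below uses that simplified model with a hypothetical head-turn speed of 100 degrees per second.

```python
def lag_angle_degrees(head_speed_deg_per_s: float, latency_ms: float) -> float:
    """How far, in degrees, the displayed view trails the real head direction.

    Simplified model: the head turns at a steady speed, and the picture shown
    was computed latency_ms ago, so it points where the head used to point.
    """
    return head_speed_deg_per_s * latency_ms / 1000.0

if __name__ == "__main__":
    head_speed = 100.0  # hypothetical moderate head turn, degrees per second
    for latency in (10, 50, 100):
        print(f"{latency:3d} ms delay -> view lags {lag_angle_degrees(head_speed, latency):.1f} degrees")
```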

The computer receives information about the user's actions from sensors or trackers attached to the head, hands, or other parts of the body. These sensors detect movement by measuring changes in a magnetic field, by sending and receiving ultrasound waves, or by following markers that give off or reflect light. Sensors on the head, mounted in a helmet or a pair of glasses or goggles, tell the computer the direction in which the user is looking. Sensors in gloves tell how much the fingers bend and where they point. Sensors on the body show how the user moves within a room. Sensors in tools such as wands, three-dimensional mice, and force balls (ball-shaped devices, mounted on a platform, which can be pushed, pulled, or twisted in place to move a cursor in three dimensions) let the user select an object in the virtual display and tell the computer how and where to move it. The most elaborate sensors reveal movement in all of what are called the six degrees of freedom: movement along three axes (left-right, or X axis; up-down, or Y axis; and forward-backward, or Z axis) and rotation around each of those axes (pitch around the X axis, yaw around the Y axis, and roll around the Z axis).
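In software, a six-degree-of-freedom reading is often stored as three position numbers and three rotation angles. The Python sketch below shows how a head tracker's yaw and pitch could be turned into the direction the user is looking. It assumes one particular axis and sign convention, and other systems use different ones. The `Pose` class and `gaze_direction` function are hypothetical names.

```python
import math
from dataclasses import dataclass
from typing import Tuple

@dataclass
class Pose:
    """Six degrees of freedom: position along X, Y, Z plus three rotations."""
    x: float      # left-right position
    y: float      # up-down position
    z: float      # forward-backward position
    pitch: float  # degrees of rotation around the X axis (nodding)
    yaw: float    # degrees of rotation around the Y axis (turning the head)
    roll: float   # degrees of rotation around the Z axis (tilting ear toward shoulder)

def gaze_direction(pose: Pose) -> Tuple[float, float, float]:
    """Unit vector pointing where a head with this pose is looking.

    Assumed convention: +X is right, +Y is up, +Z is straight ahead,
    positive yaw turns right, and positive pitch tilts the view upward.
    Roll spins the view around the line of sight, so it does not change
    the direction itself.
    """
    yaw = math.radians(pose.yaw)
    pitch = math.radians(pose.pitch)
    return (
        math.sin(yaw) * math.cos(pitch),
        math.sin(pitch),
        math.cos(yaw) * math.cos(pitch),
    )

if __name__ == "__main__":
    print(gaze_direction(Pose(0.0, 1.7, 0.0, pitch=0.0, yaw=0.0, roll=0.0)))   # straight ahead
    print(gaze_direction(Pose(0.0, 1.7, 0.0, pitch=0.0, yaw=90.0, roll=0.0)))  # turned right
```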

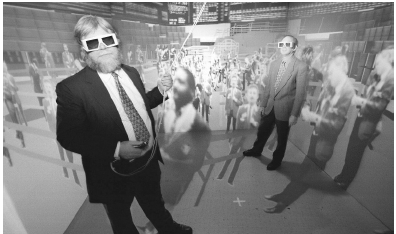

The computer, in turn, sends information to the user's senses. It most often communicates with vision through special glasses. With some virtual reality programs, including those that run on home computers, users wear glasses that are descendants of the old cardboard-and-plastic ones given to audiences watching 3-D movies in the 1950s. The two lenses in these glasses either are different colors or contain filters that pass only light waves vibrating in one direction, or polarization, with each lens set to a different direction. Like the old 3-D movie screens, the computer monitor showing the VR program displays overlapping left-eye and right-eye images in the two colors or polarizations. Each lens lets through only the image meant for its eye, and the brain combines the two slightly different views into one picture that seems to have depth.
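The colored-lens version of these glasses, called an anaglyph, is easy to imitate in code. The Python sketch below assumes red-and-cyan glasses with the red lens over the left eye, and images stored as simple lists of red, green, and blue values. It keeps the red channel from the left-eye view and the green and blue channels from the right-eye view. The polarized version cannot be shown this way, because it depends on special projection or display hardware.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]  # (red, green, blue), each 0-255

def make_anaglyph(left: List[Pixel], right: List[Pixel]) -> List[Pixel]:
    """Merge left-eye and right-eye views into one red/cyan picture.

    Assumes red-cyan glasses with the red filter over the left eye:
    the red filter passes only the red channel (taken from the left view),
    and the cyan filter passes only green and blue (taken from the right view).
    """
    if len(left) != len(right):
        raise ValueError("both views must have the same number of pixels")
    return [(l[0], r[1], r[2]) for l, r in zip(left, right)]

if __name__ == "__main__":
    left_view = [(200, 10, 20), (0, 0, 0)]
    right_view = [(30, 40, 50), (255, 255, 255)]
    print(make_anaglyph(left_view, right_view))
```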

Another type of VR glasses, called shutter glasses, was first developed in the 1980s. The lenses of these glasses are liquid-crystal displays that can be made either clear or opaque by changes in electric current. The monitor screen switches rapidly between left-eye and right-eye views of objects or scenes, and impulses from the computer clear and darken the lenses at the same rate, usually thirty to sixty times a second. As a result, the left eye always sees one view and the right eye sees the other. As with other stereoscopic vision devices, the brain combines the two images into a single one that appears three-dimensional. A beam of infrared light sent from the computer display to the glasses keeps the two synchronized. Shutter glasses are probably the most common way of showing 3-D visual effects today. Some companies sell inexpensive shutter glasses that work with home computers.
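The timing works like a schedule in which every screen update shows one eye's view while only that eye's lens is clear. The Python sketch below lists that schedule; it uses hypothetical names and no real hardware interface. Each eye receives only half of the screen's updates, so a display redrawn sixty times a second gives each eye thirty pictures a second.

```python
from typing import Iterator, Tuple

def shutter_schedule(display_hz: int, frames: int) -> Iterator[Tuple[float, str, bool, bool]]:
    """Yield (time_s, view_shown, left_lens_clear, right_lens_clear) for each screen update."""
    for i in range(frames):
        eye = "left" if i % 2 == 0 else "right"  # alternate views on every update
        # Only the lens for the eye whose view is on screen is made clear.
        yield i / display_hz, eye, eye == "left", eye == "right"

if __name__ == "__main__":
    for entry in shutter_schedule(display_hz=60, frames=4):
        print(entry)
```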

Sound, Touch, and Smell

The computer in a virtual reality system uses sound as well as vision to create the illusion of a three-dimensional environment. Like the eyes, a person's two ears receive slightly different messages because they have different positions on the head. The brain uses differences in the timing and loudness of sounds, and in their tone (the mix of high and low frequencies that reaches each ear), to locate objects in space. Room-sized VR systems send messages to the ears through two or more stereophonic speakers in different parts of the room. Systems featuring head-mounted displays use tiny speakers built into the helmet. The computer changes the signals coming through these speakers to reflect the user's movements. For instance, if a user turns away from a rushing river in a virtual display, the computer will make the sound of the water seem to move from the front to the side of the user's head.
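The timing clue alone can be estimated with a classic textbook formula, Woodworth's approximation, which treats the head as a sphere. The Python sketch below assumes a head radius of 8.75 centimeters and sound traveling at 343 meters per second, and follows the river example. As the user turns away, the river's direction relative to the head changes, and so does the delay between the ears. Real VR audio systems also adjust loudness and tone, which this sketch leaves out.

```python
import math

HEAD_RADIUS_M = 0.0875       # assumed typical adult head radius, in meters
SPEED_OF_SOUND_M_S = 343.0   # speed of sound in air at about 20 degrees C

def interaural_time_difference(azimuth_deg: float) -> float:
    """Seconds by which a sound reaches one ear before the other (Woodworth's approximation).

    azimuth_deg is the source direction relative to the nose: 0 is straight ahead,
    +90 is directly to the right, -90 directly to the left. Positive results mean
    the right ear hears it first. Valid for sources in front (-90 to +90).
    """
    theta = math.radians(azimuth_deg)
    return (HEAD_RADIUS_M / SPEED_OF_SOUND_M_S) * (theta + math.sin(theta))

def relative_azimuth(source_deg: float, head_yaw_deg: float) -> float:
    """Direction of a fixed sound source relative to where the head now points (-180 to 180)."""
    return ((source_deg - head_yaw_deg + 180.0) % 360.0) - 180.0

if __name__ == "__main__":
    river = 0.0  # the river starts straight ahead
    for head_yaw in (0.0, 45.0, 90.0):  # the user turns to the right, away from the river
        az = relative_azimuth(river, head_yaw)
        itd_us = interaural_time_difference(az) * 1e6
        print(f"head turned {head_yaw:4.0f} deg: river at {az:5.0f} deg, ear delay {itd_us:6.0f} microseconds")
```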

Many virtual reality systems include gloves or other devices that convey a sense of touch. Such devices are called haptic, from a Greek word meaning "touch." Force feedback, which uses motors to provide resistance to hand movements, is the most common way of transmitting touch sensations. Some home VR and video game systems use force feedback, for example, to suggest the resistance and vibration of the steering wheel when the user drives a virtual racing car.
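One simple way to model a force-feedback steering wheel combines three parts. A spring pulls the wheel back toward center, a damper resists fast turns, and an optional vibration imitates a bumpy road. The Python sketch below uses made-up strength values. It illustrates the idea and is not the method of any particular game system.

```python
import math

def wheel_torque(angle_deg: float, angular_velocity_deg_s: float, t: float,
                 stiffness: float = 0.05, damping: float = 0.002,
                 rumble_amplitude: float = 0.0, rumble_hz: float = 30.0) -> float:
    """Torque (arbitrary units) that the wheel's motor applies at time t seconds.

    spring: pulls the wheel back toward straight ahead (angle 0)
    damper: resists turning the wheel quickly
    rumble: optional shaking, for example on a gravel road
    """
    spring = -stiffness * angle_deg
    damper = -damping * angular_velocity_deg_s
    rumble = rumble_amplitude * math.sin(2 * math.pi * rumble_hz * t)
    return spring + damper + rumble

if __name__ == "__main__":
    for angle in (-30.0, 0.0, 30.0):
        print(f"wheel at {angle:5.1f} deg -> torque {wheel_torque(angle, 0.0, 0.0):+.2f}")
```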

A penlike, or stylus, device called Phantom, which is often part of the virtual reality design systems that some businesses use, employs force feedback in a more complex way. For instance, when two people using Phantoms in different locations are linked by a computer network, the stylus can make one person's finger follow a path traced by the other.
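Linking two styli can be pictured as joining them with an invisible spring. Each device's motors push it toward the other device's position, with a force that grows with the distance between them. The Python sketch below models only the following stylus, treats it as a small mass, and uses invented values for stiffness, mass, and damping. It is a simplified illustration, not a description of how the Phantom's software actually works.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]

def coupling_force(leader: Vec3, follower: Vec3, stiffness: float) -> Vec3:
    """Spring force pulling the follower's tip toward the leader's tip."""
    return tuple(stiffness * (lead - follow) for lead, follow in zip(leader, follower))

def simulate_follow(leader: Vec3, start: Vec3, stiffness: float = 100.0,
                    mass: float = 0.1, damping: float = 5.0,
                    dt: float = 0.001, steps: int = 2000) -> Vec3:
    """Step the follower forward in time (semi-implicit Euler) and return its final position."""
    pos = list(start)
    vel = [0.0, 0.0, 0.0]
    for _ in range(steps):
        force = coupling_force(leader, tuple(pos), stiffness)
        for i in range(3):
            accel = (force[i] - damping * vel[i]) / mass  # spring pull minus damper drag
            vel[i] += accel * dt
            pos[i] += vel[i] * dt
    return tuple(pos)

if __name__ == "__main__":
    leader_tip = (1.0, 0.5, 0.0)  # hypothetical point traced by the first user
    print(simulate_follow(leader_tip, (0.0, 0.0, 0.0)))
```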

In elaborate VR systems, a computer can transmit touch sensations to a whole hand or arm by means of a framework or exoskeleton. One device of this kind is called CyberGrasp. CyberGrasp's exoskeleton fits over a glove that contains twenty flexible sensors. These sensors pick up complex data about the hand's motion and send it to the computer. The computer translates the information into a picture of a virtual hand making the same motions and shows the hand on its monitor. At the same time, it sends signals to motors that roll or unroll cables in different parts of the framework. The cables push or pull the parts of the framework, thereby conveying sensation to the fingers. In June 2001, Time International reporter Adi Ignatius described using CyberGrasp:

On a computer screen a 3-D image of a ball appears as well as a representation of my hand, which I control by moving the big, spiderlike exoskeleton I'm wearing. As I manipulate the ball, the fingertips of the CyberGrasp sense the force feedback via a network of artificial tendons. I 'feel' the ball as I bat it through cyberspace. There are flaws: the hand sometimes goes through the object. But it's a thrill touching something that isn't there. 7
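Before a virtual hand can copy the real one, the computer must turn each bend sensor's raw reading into a joint angle. One simple approach records readings with the hand held flat and then clenched, and scales everything in between. The Python sketch below assumes that kind of two-point calibration. The joint names and numbers are hypothetical, and CyberGrasp's own software may work differently.

```python
from typing import Dict, Tuple

def reading_to_angle(raw: float, flat: float, fist: float, max_angle: float = 90.0) -> float:
    """Convert one bend-sensor reading to a joint angle in degrees.

    `flat` and `fist` are the readings recorded while the user held the hand
    open and then closed (an assumed calibration step). Readings in between
    are mapped linearly, and out-of-range values are clamped.
    """
    fraction = (raw - flat) / (fist - flat)
    fraction = min(max(fraction, 0.0), 1.0)
    return fraction * max_angle

def hand_pose(readings: Dict[str, float],
              calibration: Dict[str, Tuple[float, float]]) -> Dict[str, float]:
    """Joint angles for every sensor that has calibration data."""
    return {
        joint: reading_to_angle(raw, *calibration[joint])
        for joint, raw in readings.items()
        if joint in calibration
    }

if __name__ == "__main__":
    calibration = {"index_knuckle": (100.0, 200.0), "thumb_knuckle": (80.0, 160.0)}
    print(hand_pose({"index_knuckle": 150.0, "thumb_knuckle": 90.0}, calibration))
```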

A few experimental systems have even added smell to virtual reality displays. One called iSmell mixes chemicals from a "scent cartridge" to produce smells ranging from cotton candy to ocean breezes, much as painters can mix, say, yellow and blue pigments together to make green. So far, however, smell-producing technology has not been very convincing, and there has been little demand for it. Inventors have not even tried (yet) to put taste sensations into virtual reality.

Head-Mounted Displays

Two main types of virtual reality systems are used today. One type, descended from pilot-trainer helmets and Ivan Sutherland's Sword of Damocles, features a head-mounted display, or HMD. HMDs are far less bulky now than they were in virtual reality's early days, although some still cause neck and back pain if worn too long. HMDs that use cathode-ray tube screens provide some of the most convincing virtual reality displays, but they are expensive. Displays using liquid crystals, descendants of Michael McGreevy's VIVED and Scott Fisher's VIEW, are less costly, but they are also less sharp and clear. HMDs are often used with gloves or other devices that send messages to and from parts of the body other than the head.

One type of HMD delivers what is called augmented reality. A modified form of the heads-up displays developed for pilots in the 1960s, augmented reality headsets use combinations of prisms and lenses to reflect computer-generated images into the user's eyes in a way that makes the semitransparent images seem to float above real objects. A surgeon wearing this kind of headset, for instance, might see an X-ray or an ultrasound image of part of a patient's body placed over a view of the actual patient. Instead of being distracted by having to look back and forth between the patient and a monitor showing the image, the surgeon can see both at the same time. One problem with augmented reality headsets, however, is that their displays can be hard to read in bright light, just as the tiny LCD screens on digital cameras are often hard to see on a sunny day. Today, most augmented reality systems are still experimental, but they are beginning to be tested for commercial use.
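A simplified model of a see-through headset helps explain the trouble with bright light. The lenses pass part of the light from the real world, and the display adds its own light on top. The Python sketch below compares how much the overlay stands out indoors and outdoors. The brightness figures (in candelas per square meter) and the 70 percent lens transmission are rough assumed values, not specifications of any real headset.

```python
def overlay_contrast(background_nits: float, display_nits: float,
                     transmission: float = 0.7) -> float:
    """Ratio of (real scene + overlay) to (real scene alone) as seen through the headset.

    Simplified model of an optical see-through display: the lenses let through a
    fraction `transmission` of the real scene's light, and the display adds its
    own light on top. A ratio near 1.0 means the overlay is barely visible.
    """
    seen_background = transmission * background_nits
    return (seen_background + display_nits) / seen_background

if __name__ == "__main__":
    display = 300.0  # assumed display brightness
    for label, scene in (("dim operating room", 100.0), ("sunny day outdoors", 10_000.0)):
        print(f"{label}: overlay contrast {overlay_contrast(scene, display):.2f}")
```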

CAVEs

Around 1990, Thomas DeFanti, then a researcher at the Electronic Visualization Laboratory at the University of Illinois at Chicago, got the idea for the second main type of virtual reality system while trying on a suit in front of a triple mirror in a clothing store's dressing room. Looking at the three reflected images of himself, he pictured a system that would place multiple images on the walls and even perhaps the floor and the ceiling of a small room, creating a virtual environment that completely surrounded its viewers. (Ivan Sutherland, too, had imagined that his ultimate display would fill a room.) DeFanti, Carolina Cruz-Neira, and Daniel Sandin built the first room-sized virtual reality system in 1991. Recalling the metaphor that Plato had used long ago, they called it the CAVE, a recursive acronym for CAVE Automatic Virtual Environment.

The first CAVE was a cube-shaped room measuring ten feet on each side. Rear projection units showed pictures on three of its four walls, and an overhead projector placed another image on the floor. "Unlike users of the video-arcade [head-mounted display] type of virtual reality system," Sandin and DeFanti wrote, "CAVE 'dwellers' do not need to wear helmets, which would limit their view of and mobility in the real world." 8 Instead, people in the CAVE wore lightweight shutter glasses, which an infrared beam synchronized with the changing computer display. The glasses contained tracking sensors that told the computer where the wearers were standing and where they were looking. The people moved objects in the display with control wands.
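The tracking sensors matter because the correct picture on each wall depends on where the viewer's eyes are. To place a virtual point, the computer can trace a straight line from the eye to that point and draw the point where the line crosses the wall. The Python sketch below does this for one flat wall, assuming the wall is the plane where z equals a fixed value. A full CAVE would need to repeat such calculations for every wall and for each eye separately.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]

def project_onto_wall(eye: Vec3, point: Vec3, wall_z: float) -> Vec3:
    """Where on a flat wall (the plane z = wall_z) to draw `point` so that it
    lines up correctly for an eye at `eye`.

    Traces the straight line from the eye to the virtual point and finds where
    it crosses the wall. Assumes the point is not level with the eye in z.
    """
    ex, ey, ez = eye
    px, py, pz = point
    t = (wall_z - ez) / (pz - ez)  # fraction of the way from the eye to the point
    return (ex + t * (px - ex), ey + t * (py - ey), wall_z)

if __name__ == "__main__":
    virtual_point = (1.0, 1.0, 10.0)  # a point that appears to lie beyond the front wall
    for eye in ((0.0, 0.0, 0.0), (1.0, 0.0, 0.0)):  # the viewer steps one unit to the right
        print(eye, "->", project_onto_wall(eye, virtual_point, wall_z=5.0))
```

When the viewer moves, the same virtual point must be drawn at a different spot on the wall. Without tracking, the scene would appear to slide as the viewer walks.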

The CAVE proved so appealing that researchers and inventors created many variations of it. These CAVE-type systems immerse their users in the virtual experience more completely than any other kind of VR environment. (Some CAVEs have ripped projection screens because people have been so convinced by the systems' illusion that they literally walked into the room's walls.) They let people move naturally within the environment, freed from heavy helmets.

The main drawback of CAVEs is that they are very expensive. A complete CAVE system can cost a million dollars or more. As a result, only a few large universities and wealthy corporations have them. In the hope of bringing CAVE-type systems to more people, inventors have created simpler and less costly versions that have some of the same advantages. One, the PlatoCAVE, projects an image on only one wall. Another form, the RAVE (Reconfigurable Advanced Visualization Environment), can be taken apart and moved to different locations. It has three eight- to ten-foot screens that may be used separately or combined in various ways.

The group who invented the CAVE also created a smaller version, which they call the ImmersaDesk. The ImmersaDesk is the size of a large desk or drafting table and contains a single large screen. When a viewer wearing shutter glasses faces the desk, he or she sees a three-dimensional image that appears to rise above the desk.

Beyond HMDs and CAVEs

A new type of VR system, sometimes called artificial reality, combines some features of both HMD and CAVE displays. It blends live action with computer graphics. In one such system, called LiveActor, the user wears a suit containing thirty sensors that pick up the motion of different parts of the body. As the user moves in a CAVE-like room about ten feet by twenty feet, the computer makes a character in the projected virtual environment carry out the same actions. Artificial reality has been used to create artworks in which viewers in effect become part of the display. It has also let users "participate" in sports ranging from boxing to golf, either for training purposes or just for fun.
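At its simplest, driving the on-screen character means copying each sensor's position onto the matching part of the character, after converting room measurements into virtual-world units. The Python sketch below shows only that step, with hypothetical sensor and joint names and a made-up scale factor. It copies positions only; a real system would also copy rotations and fill in joints that have no sensor.

```python
from typing import Dict, Tuple

Vec3 = Tuple[float, float, float]

# Hypothetical mapping from suit sensor names to the character's joint names.
SENSOR_TO_JOINT = {"head": "Head", "left_wrist": "LeftHand",
                   "right_wrist": "RightHand", "pelvis": "Hips"}

def room_to_world(p: Vec3, scale: float, origin: Vec3) -> Vec3:
    """Convert a position measured in the room into virtual-world coordinates."""
    return tuple(o + scale * c for o, c in zip(origin, p))

def drive_avatar(readings: Dict[str, Vec3], scale: float, origin: Vec3) -> Dict[str, Vec3]:
    """New joint positions for the character, one per recognized sensor."""
    pose = {}
    for sensor, position in readings.items():
        joint = SENSOR_TO_JOINT.get(sensor)
        if joint is not None:  # ignore sensors the character has no joint for
            pose[joint] = room_to_world(position, scale, origin)
    return pose

if __name__ == "__main__":
    frame = {"head": (1.0, 1.7, 2.0), "pelvis": (1.0, 1.0, 2.0)}
    print(drive_avatar(frame, scale=2.0, origin=(10.0, 0.0, 0.0)))
```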

Convincing as the best VR systems can be, most observers agree that virtual reality technology has a long way to go before it can produce displays as good as those on the Holodeck. VR devices are often unreliable and uncomfortable to use. Stand-alone VR systems also are still very expensive: according to CyberEdge Information Services, which reports regularly on the industry, the average price of such a system worldwide was about $142,000 in 2002. Nonetheless, more and more people in education, science, industry, business, and entertainment are finding new and exciting uses for virtual reality.
