Chapter 2

Mind Versus Metal

The activities that kindergarten-age children perform effortlessly, like knowing the difference between a cup and a chair, or walking from one room to the next without bumping into the wall, were not thought of as intelligent behavior or worthy of study by traditional AI researchers. But when traditional systems did not perform as they had expected, experts in AI began to wonder what intelligence really meant. They also began to think about different ways to show intelligence in a machine. Although the definition of intelligence is still debated today, scientists understand that intelligence is more than the sum of facts a person knows; it also derives from what a person experiences and how a person perceives the world around him or her. Neil Gershenfeld, author of When Things Start to Think, believes that "we need all of our senses to make sense of the world, and so do computers." 5 As ideas about human intelligence changed, so did approaches to creating artificial intelligence.

In the 1980s AI experts working in robotics began to realize that the simple activities humans take for granted are much more difficult to replicate in a machine than anyone thought. As AI researcher Stewart Wilson of the Rowland Institute in Cambridge explains:

AI projects were masterpieces of programming that dealt with various fragments of human intelligence.…But they were too specialized.…They couldn't take raw input from the world around them; they had to sit there waiting for a human to hand them symbols, and they then manipulated the symbols without knowing what they meant. None of these programs could learn from or adapt to the world around them. Even the simplest animals can do these things, but they had been completely ignored by AI. 6

Breaking away from traditional AI programming, some researchers veered off to study the lower-level intelligence displayed by animals. One such person is Rodney Brooks, the director of the Computer Science and Artificial Intelligence Laboratory at the Massachusetts Institute of Technology (MIT).

Insect Intelligence

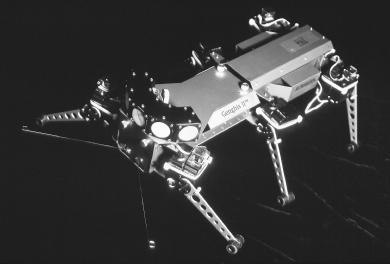

Brooks started at the bottom of the evolutionary ladder, with insects, which were already capable of doing what the most sophisticated AI machines could not do. Insects can move at speeds of a meter or more per second, avoid obstacles in their path, evade predators, and seek out mates and food without having to have a mental map as Shakey the robot did. Instead of preprogramming behaviors, Brooks programmed in less information, just enough to enable his AI robots to adapt to objects in their path. He felt that navigation and perception were key to mastering higher-level intelligence. This trend became known as the bottom-up approach, in contrast to the top-down approach of programming in all necessary information.

Brooks's focus was creating a machine that could perceive the world around it and react to it. As a result, he created Genghis, a six-legged insectlike robot. According to Brooks, "When powered off, it sat on the floor with its legs sprawled out flat. When it was switched on, it would stand up and wait to see some moving infrared source. As soon as its beady array of six sensors caught sight of something, it was off." 7 Its six sensors picked up on the heat of a living creature, such as a person or a dog, and triggered the stalking mode. It would scramble to its feet and follow its prey, moving around furniture and climbing over obstacles to keep the prey in sight.

Brooks's machine could "see" and adapt to its environment, but it could not perform higher-level intelligent behaviors at the same time. A man, for example, can make a mental grocery list while he is walking down the street to the store; a woman can carry on a conversation with a passenger while driving safely down the road and looking for an address. Researchers began to ask, how is the human brain able to perform so many tasks at the same time?

How the Brain Works

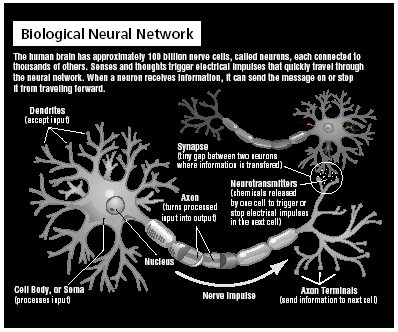

The human brain has close to 100 billion nerve cells, called neurons. Each neuron is connected to thousands of others, creating a neural network that constantly shuttles information, in the form of stimuli, in and out of the brain.

Each neuron is made up of four main parts: the synapses, soma, axon, and dendrites. The soma is the body of the cell where the information is processed. Each neuron has long, thin nerve fibers called dendrites that bring information in and even longer fibers called axons that send information away. The neuron receives information in the form of electrical signals from neighboring neurons across one of thousands of synapses, small gaps that separate two neurons and act as input channels.

Once a neuron has received this charge it triggers either a "go" signal that allows the message to be passed to the next neuron or a "stop" signal that prevents the message from being forwarded. When a person thinks of something, sees an image, or smells a scent, that mental process or sensory stimulus excites a neuron, which fires an electrical pulse that shoots out through the axons and fires across the synapse. If enough input is received at the same time, the neuron is activated to send out a signal to be picked up by the next neuron's dendrites. Most of the brain consists of the "wiring" between the neurons, which makes up one thousand trillion connections. If these fibers were real wire, they would measure out to an estimated 63,140 miles inside the average skull.

Each stimulus leads to a chain reaction of electrical impulses, and the brain is constantly firing and rewiring itself. When neurons repeatedly fire in a particular pattern, that pattern becomes a semipermanent feature of the brain. Learning comes when patterns are strengthened, but if connections are not stimulated, they are weakened. For example, the more a student repeats the number to open a combination lock, the more the connections that take in that information are bolstered to create a stronger memory that will be easily retrieved the next time. At the end of the school year, when a student puts the lock away, that number will not be used for a couple of months. Those three numbers will be much harder to recall when fall comes and that student needs to open the lock again.

Artificial Neural Networks

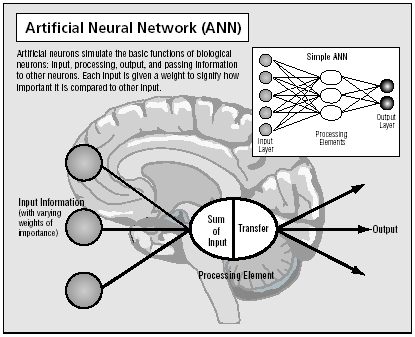

The branch of AI that modeled its work after the neural network of the human brain is called connectionism. It is based on the belief that the way the brain works is all about making the right connections, and those connections can just as easily be made using silicon and wire as living neurons and dendrites.

Called artificial neural networks (ANNs), these programs work in a way loosely modeled on the brain's neural network. An artificial neuron has a number of connections or inputs. To mimic a real neuron, each input is weighted with a fraction between 0 and 1. The weight indicates how important the incoming signal for that input is going to be. An input weighted 0.4 is more important than an input weighted 0.1. All of the incoming signals' weights are added together, and the total is the net value of the neuron.

Each artificial neuron is also given a number that represents the threshold or point over which the artificial neuron will fire and send on the signal to another neuron. If the net value is greater than the threshold, the neuron will fire. If the value is less than the threshold, it will not fire. The output from the firing is then passed on to other neurons that are weighted as well. For example, the computer's goal is to answer the question, Will the teacher give a quiz on Friday? To help answer the question, the programmer provides these weighted inputs:

The teacher loves giving quizzes = 0.2.

The teacher has not given a quiz in two weeks = 0.1.

The teacher gave the last three quizzes on Fridays = 0.3.

The sum of the input weights equals 0.6. The threshold assigned to that neuron is 0.5. In this case, the net value of the neuron exceeds the threshold number so the artificial neuron is fired. This process occurs again and again in rapid succession until the process is completed.
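The quiz example can be sketched in a few lines of Python. This is only an illustration of the weighted-sum-and-threshold idea described above; the function name `neuron_fires` and the dictionary labels are made up for this sketch, while the weights (0.2, 0.1, 0.3) and the threshold (0.5) are the ones given in the text.

```python
def neuron_fires(weights, threshold):
    """Return (net_value, fired) for a single artificial neuron.

    Hypothetical helper: adds up the weights of the active inputs and
    compares the total against the neuron's threshold.
    """
    net_value = sum(weights)
    return net_value, net_value > threshold


# Weighted inputs from the "quiz on Friday" example.
quiz_inputs = {
    "teacher loves giving quizzes": 0.2,
    "no quiz in two weeks": 0.1,
    "last three quizzes were on Fridays": 0.3,
}

net, fired = neuron_fires(quiz_inputs.values(), threshold=0.5)
print(f"net value = {net:.1f}, fires = {fired}")
```

Because the net value (0.6) is above the threshold (0.5), `fired` comes back true; lowering any one weight enough to bring the total to 0.5 or below would keep the neuron silent.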

If the ANN is wrong, and the teacher does not give a quiz on Friday, then the weights are lowered. Each time a correct connection is made, the weight is increased. The next time the question is asked, the ANN will be more likely to answer correctly. The proper connections are weighted so that there is more chance that the machine will choose that connection the next time. After hundreds of repeated training processes, the correct neural network connections are strengthened and remembered, just like a memory in the human brain. This is how the ANN is trained rather than programmed with specific information. A well-trained ANN is said to be able to learn. In this way the computer is learning much like a child learns, through trial and error. Unlike a child, however, a computer can make millions of trial-and-error attempts at lightning speed.
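One way to picture this trial-and-error training is the sketch below. It is not the procedure of any particular ANN product: it uses a simple perceptron-style rule (weights change only after a wrong guess) as a stand-in for the raising and lowering of weights described above. The function names, the learning rate of 0.05, and the four training examples are all invented for illustration.

```python
def predict(weights, inputs, threshold):
    """Fire (True) if the weighted sum of the active inputs beats the threshold."""
    return sum(w * x for w, x in zip(weights, inputs)) > threshold


def train(weights, threshold, examples, rate=0.05, rounds=100):
    """Nudge the weights after every wrong guess (perceptron-style update)."""
    weights = list(weights)
    for _ in range(rounds):
        for inputs, quiz_happened in examples:
            if predict(weights, inputs, threshold) == quiz_happened:
                continue  # correct guess: leave the weights alone
            # Guessed "no quiz" but there was one: raise the weights.
            # Guessed "quiz" but there was none: lower them.
            step = rate if quiz_happened else -rate
            # Only inputs that were active (1) have their weight changed.
            weights = [w + step * x for w, x in zip(weights, inputs)]
    return weights


# Made-up history: (loves quizzes, no quiz in two weeks, Friday pattern) -> quiz?
examples = [
    ((1, 1, 1), True),
    ((1, 0, 1), True),
    ((1, 1, 0), False),
    ((0, 1, 0), False),
]

trained = train([0.2, 0.1, 0.3], threshold=0.5, examples=examples)
print([round(w, 2) for w in trained])
print(all(predict(trained, x, 0.5) == y for x, y in examples))
```

In this toy history a quiz only ever follows the Friday pattern, so repeated passes push the weights toward values that separate the quiz days from the others.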

Whereas traditional AI expert systems are specialized and inflexible, ANN systems are trainable and more flexible, and they can deal with a wide range of data and information. They can also learn from their mistakes. This kind of AI is best for analyzing and recognizing patterns.

Pattern Recognition

Pattern recognition may seem obvious or trivial, but it is an essential, basic component of the way people learn. Looking around a room, a child learns the patterns of the room's layout and recognizes objects in the room. People know that a pencil is a pencil because of the pattern the pencil presents to them. They learn and become familiar with that pattern. Learning a language is actually the activity of learning numerous patterns in letters, syllables, and sentences. The first ANN prototype, Perceptron, created in the 1950s, was trained to perform the difficult task of identifying and recognizing the letters of the alphabet. Today more sophisticated ANNs are also capable of finding patterns in auditory data. ANN software or programs are used to analyze handwriting, compare fingerprints, process written and oral language, and translate languages.

Incorporated into expert systems, ANNs can provide a more flexible program that can learn new patterns as time goes on. They are especially useful when all the facts are not known. These programs have proven more reliable than humans when analyzing applications for credit cards or home mortgage loans. An ANN system can find patterns in data that are undetectable to the human eye. Such tracking is called data mining, and oftentimes the outcome is surprising. For example, the sophisticated computers that keep track of Wal-Mart's sales discovered an odd relationship between the sale of diapers and the sale of beer. It appeared that on Friday nights the sales of both products increased.

Some police departments use an ANN search engine called Coplink to search multiple case files from different locations and criminal databases to find patterns to seemingly unrelated crimes. Coplink helped catch the two snipers convicted of a string of shootings in the Washington, D.C., area in 2002.


The Chicago police department uses another ANN program called Brainmaker to predict which police officers would be more likely to become corrupt and not perform their job according to the oath they took upon becoming an officer. Another software product called True Face recognizes human faces by comparing video images to thousands of stored images in its memory in spite of wigs, glasses, makeup, or bad lighting.

Breeding Programs

Another model of machine learning is based on the biological system of genetics, in which systems change over time. Introduced by John Henry Holland at the University of Michigan in the 1960s, this kind of system uses genetic algorithms. An algorithm is simply a step-by-step process for solving a specific problem. An algorithm comes in many forms, but usually it is a set of rules by which a process is run. In a genetic system an algorithm is made up of an array of bits or characters, much like a chromosome is made up of bits of DNA. Each bit is encoded with certain variables of the problem or with functions used in solving the problem. Just as a gene would carry specific information about the makeup of an organism (blue or green eyes), a bit might select for functions of addition or subtraction.

A Commonsense Computer

According to expert Marvin Minsky, as quoted in the Overview of Cycorp's Research and Development, the one problem with artificial intelligence is that "people have silly reasons why computers don't really think. The answer is we haven't programmed them right; they just don't have common sense." Common sense allows a person to make assumptions, jump to conclusions, and make sound decisions based on common knowledge.

Doug Lenat took up the challenge and created Cyc, a program named after the word encyclopedia. For more than twenty years, Lenat and his team at Cycorp have fed the Cyc system with every bit of knowledge that a typical adult would or should know; for example, that George Washington was the first president, that plants perform photosynthesis, that it snows in Minnesota, that e-mail is an Internet communication, and that beavers build dams. The idea behind this long-term project is that Cyc will be able to make reasonable assumptions with the knowledge it has been given. For example, if a person said that he or she had read Melville, Cyc might assume that the person had read the author Herman Melville's most famous book, Moby Dick.

In traditional AI, algorithms would be programmed into a system to perform the same task over and over again without variation. But in a genetic system the computer is given a large pool of chromosomes or bit strings encoded with various bits of information. The bit strings are tested to see how well they perform at the task at hand. The algorithms that perform the best are then bred using the genetic concepts of mutation and crossover to create a new generation of algorithms. Mutations occur by flipping the value of bits at random locations on the chromosome. Mutations can bring about drastic random change that may or may not improve the next generation. Crossover occurs when two parent programs trade and insert fragments from one to the other. Because whole fragments are exchanged, groups of bits or genes that work well together tend to stay together.

With each change, the procedure creates new combinations. Some may work better than the parent generation and others may not. Those algorithms that do not perform well are dropped out of the system like a weak fledgling from a nest. Those that perform better become part of the breeding process. Genetic programs can be run many times, creating thousands of generations in a day. Each time, bits of information are changed and the set of rules is improved until an adequate solution to the problem is found.
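The breeding cycle described above (test, keep the best, cross over, mutate, repeat) can be sketched as a small Python program. Everything specific here is an assumption made for illustration: the toy task of maximizing the number of 1 bits, the population size, the 2 percent mutation rate, and the function names are not taken from any real genetic system.

```python
import random


def fitness(bits):
    """Toy task (an assumption): the more 1 bits, the better the chromosome."""
    return sum(bits)


def mutate(bits, rate=0.02):
    """Flip each bit's value with a small probability."""
    return [1 - b if random.random() < rate else b for b in bits]


def crossover(mom, dad):
    """Join the head of one parent to the tail of the other at a random cut."""
    cut = random.randrange(1, len(mom))
    return mom[:cut] + dad[cut:]


def evolve(pop_size=30, length=20, generations=40, seed=0):
    """Breed bit strings for a number of generations and return the best one."""
    random.seed(seed)  # fixed seed so repeated runs behave the same
    population = [[random.randint(0, 1) for _ in range(length)]
                  for _ in range(pop_size)]
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        parents = population[:pop_size // 2]  # the weaker half is dropped
        children = [mutate(crossover(*random.sample(parents, 2)))
                    for _ in range(pop_size - len(parents))]
        population = parents + children  # parents survive unchanged
    return max(population, key=fitness)


best = evolve()
print(fitness(best), "of", len(best), "bits set")
```

Keeping the parents alongside their children is a design choice in this sketch: it guarantees that the best score never gets worse from one generation to the next.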

The kinds of problems that genetic algorithms are most useful at solving involve a large number of possible solutions. They are fondly called traveling salesman problems, after the classic example of a salesperson finding the shortest route to take to visit a set number of towns. With five towns to visit, there are 120 possible routes to take, but with twenty-five towns to visit, there are about 155 × 10²³ (or roughly 155 followed by 23 zeros) possible routes, and finding the shortest route becomes an almost insurmountable task.
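The route counts quoted above match the factorial of the number of towns (5 × 4 × 3 × 2 × 1 = 120). Assuming that is how they were counted, a short Python check shows how quickly the total grows; the function name `route_count` is just a label for this sketch.

```python
import math


def route_count(towns):
    """Number of different orders in which the towns can be visited (n!)."""
    return math.factorial(towns)


for towns in (5, 10, 25):
    print(f"{towns} towns: {route_count(towns):.3e} possible routes")
```

Checking every one of those routes is practical for five towns but hopeless for twenty-five, which is why a method that only has to find a good-enough route is attractive.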

Such a task is a common one in real life. It is a problem for airlines that need to schedule the arrivals and departures of hundreds of planes and for manufacturers who need to figure out the most efficient order in which to assemble their product. Phone companies also need to figure out the best way to organize their network so that every call gets through quickly.

Genetic algorithms are also used to program the way a robot moves. There are an almost infinite number of potential moves, and genetic algorithms find the most efficient method. After all of the genetic algorithms have evolved, the genetic program produces an overall strategy using the best moves for certain situations. The best performing programs are then taken out of the robots and reassembled to produce a new generation of mobile robots.

In the future, these genetic systems may even evolve into software that can write itself. Two parent programs will combine to create many offspring programs that are either faster, more efficient, or more accurate than either of the two parents.

Logic and Fuzzy Logic

Regardless of the technique that is used to perform certain AI functions, most software is created using complex mathematical logic. A common symbolic logic system used in computer programming was developed in the mid-1800s by mathematician George Boole, and many search engines today use Boolean operators—AND, NOT, and OR—to logically locate appropriate information. Boolean logic is a way to describe sets of objects or information. For example, in Boolean logic:

Strawberries are red is true.

Strawberries are red AND oranges are blue is false.

Strawberries are red OR oranges are blue is true.

Strawberries are red AND oranges are NOT blue is true.

Boole's logic system fit well into computer science because all information could be reduced to either true or false and could be represented in the binary number system of 0s and 1s, conventionally with true represented by 1 and false by 0.
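The strawberry statements above translate almost word for word into Python, whose `and`, `or`, and `not` keywords are Boolean operators. The variable names are invented for this sketch.

```python
strawberries_are_red = True
oranges_are_blue = False

# Each line mirrors one of the statements in the list above.
both = strawberries_are_red and oranges_are_blue
either = strawberries_are_red or oranges_are_blue
red_and_not_blue = strawberries_are_red and not oranges_are_blue

print(both, either, red_and_not_blue)

# In Python, True and False behave as the binary digits 1 and 0.
print(int(True), int(False))
```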

Computers tend to view all information as black or white, true or false, on or off. But not everything in life follows the strict true-false, if-then logic. In the 1960s mathematician Dr. Lotfi Zadeh of the University of California at Berkeley developed the concept of fuzzy logic. Fuzzy logic systems work when hard and fast rules do not apply. They are a means of generalizing or softening any specific theory from a crisp and precise form to a continuous and fuzzy form.

Programs that forecast the weather, for example, deal in fuzzy logic. A fuzzy logic system understands air temperature as being partly hot rather than being either hot or cold, and the temperature is expressed in the form of a percentage. Fuzzy logic programs are used to monitor the temperature of the water inside washing machines, to control car engines, elevators, and video cameras, and to recognize the subtle differences in written and spoken languages.
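The idea of a temperature being "partly hot" can be sketched with a simple membership function. The cutoffs used here (50 degrees Fahrenheit as not hot at all, 90 degrees as fully hot) and the straight-line ramp between them are assumptions chosen for illustration, not values from any real fuzzy logic controller.

```python
def hot_membership(temp_f, cold_at=50.0, hot_at=90.0):
    """Degree, from 0.0 to 1.0, to which a temperature counts as 'hot'.

    The 50 and 90 degree cutoffs are illustrative assumptions.
    """
    if temp_f <= cold_at:
        return 0.0
    if temp_f >= hot_at:
        return 1.0
    # Between the cutoffs, hotness rises in a straight line.
    return (temp_f - cold_at) / (hot_at - cold_at)


for temp in (40, 60, 70, 95):
    print(f"{temp} F is {hot_membership(temp):.0%} hot")
```

A strict true-false rule would have to pick a single temperature at which "cold" flips to "hot"; the fuzzy version lets a 70-degree day be half hot instead.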

Alien Intelligence

Although traditional expectations of artificial intelligence are to duplicate human intelligence, author and computer specialist James Martin believes that that expectation is unrealistic. He believes that in the future AI should more properly be called alien intelligence, because the way computers "think" is vastly different from the way a human thinks.

AI is faster and has a larger capacity for storage and memory than any human. The largest nerves in the brain can transmit impulses at around 90 meters per second, whereas a fiber optics connection can transmit impulses at 300 million meters per second, more than 3 million times faster. A human neuron fires in one-thousandth of a second, but a computer transistor can fire in less than one-billionth of a second. The brain's memory capacity is some 30 billion neurons, while the data warehouse computer at Wal-Mart has more than 168 trillion bits of storage with the capacity to grow each year. Such a computer can process vast amounts of data that would bury a human processor and can quickly find patterns invisible to the human eye. The logical processes that some systems go through are so complex that even the best programmers cannot understand them. These computers, in a sense, speak a language that is understood only by another computer. Martin suggests that AI researchers of the past, who predicted a robot in every kitchen, promised more than could be delivered. But what they did create is much more than anyone could have dreamed possible.
